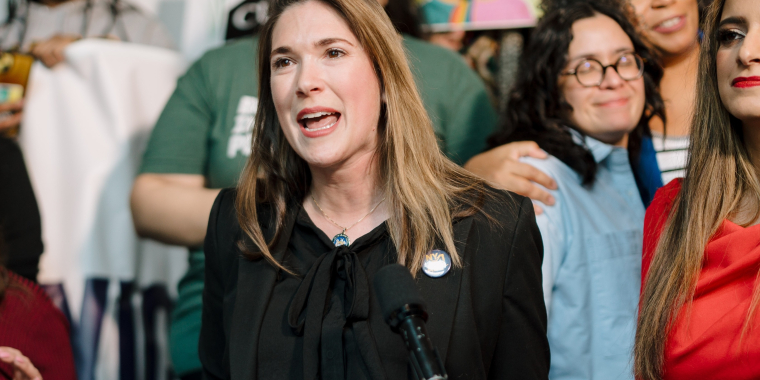

Hinchey Bill to Ban Non-Consensual Deepfake Images Signed into Law

October 2, 2023

KINGSTON, NY – Senator Michelle Hinchey today announced that Governor Hochul has signed her bill (S1042A) into law, making it illegal to disseminate AI-generated explicit images or “deepfakes” of a person without their consent. Deepfake porn involves creating fake sexually explicit media using someone’s likeness. Those found guilty could face a year in jail and a $1,000 fine, and victims have the right to pursue legal action against perpetrators.

Senator Michelle Hinchey said, “New York has made significant strides to combat revenge porn and the non-consensual sharing of intimate media online, but there are bad actors who are getting around these laws by exploiting AI tools to generate fake intimate images of people they don’t even know. This is an entirely new realm of digital violation that demands vigilant attention and new legislative protections. That’s why we’ve taken decisive action not only to outlaw this malicious practice but also to broaden the ban to anyone who circulates fake intimate images without a person’s consent. My bill sends a strong message that New York won’t tolerate this form of abuse, and victims will rightfully get their day in court. I thank Governor Hochul for signing it into law.”

In 2019, New York joined 41 other states in outlawing “revenge porn,” the sharing of explicit content without consent, often by ex-partners, to harm victims. However, New York State law has lagged behind in addressing the rise of online deepfake porn, in which real people are portrayed in fake situations by perpetrators unknown to them. Hinchey’s bill is the first action to advance these specific protections in New York.

According to an MIT Technology Review report, the majority of deepfakes target women. Sensity AI, a company that has tracked online deepfake videos since December 2018, reports that between 90% and 95% of these videos are non-consensual porn involving women. A report published by Sensity AI in 2021 estimated that more than 85,000 harmful deepfake videos had been detected up to December 2020, with the number doubling every six months since observations began in December 2018.

###
